Maritime Computer Vision Workshop @ CVPR 2026

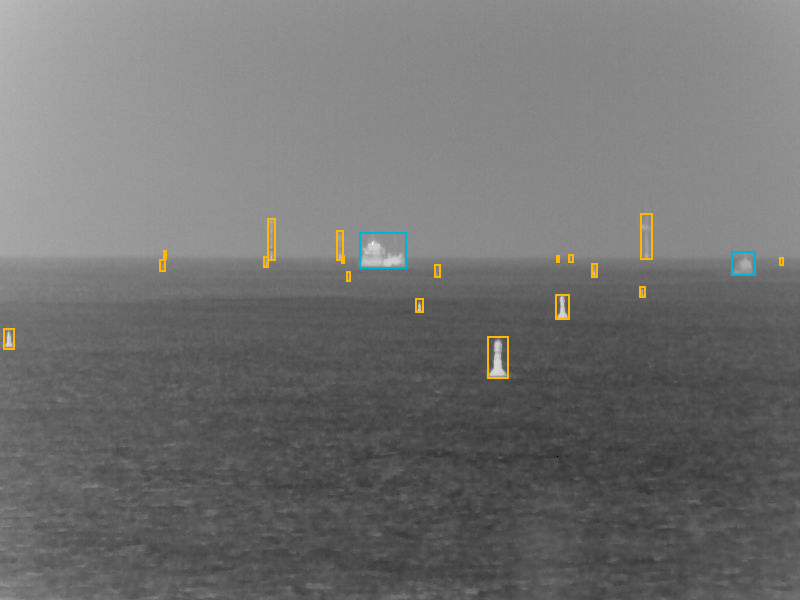

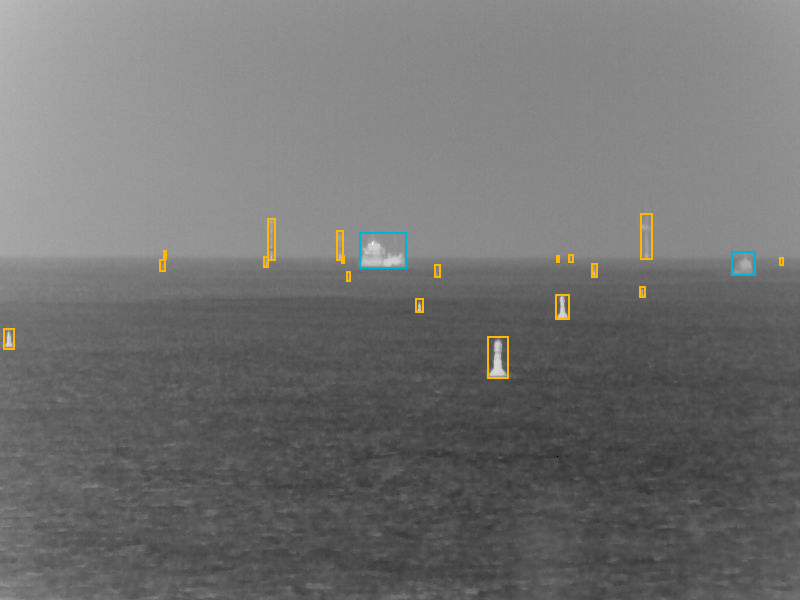


Thermal Object Detection Challenge

Quick links:

Challenge code & data

Submit

Leaderboard

Source dataset paper

Ask for help

Quick Start

- Download the ready-to-use challenge dataset (thermal infrared images).

- Train your detector on the training split and tune it on the validation split.

- Create a COCO-style JSON with predictions for the test set and upload it to the evaluation server.

Prize

The top team will win an NVIDIA RTX 5080 GPU, sponsored by catskill. Check the terms and conditions below.

Overview

Night-time and low-visibility conditions are critical for autonomous and assisted navigation of unmanned surface vehicles (USVs).

This challenge focuses on object detection in thermal infrared (IR) imagery, encouraging methods robust to non-daylight environments.

The challenge is based on the Maritime Collision Avoidance Dataset Germany, English Channel, and The Netherlands (Gorczak et al.), recorded in German, British, and Dutch waters (2023–2025) and originally annotated using a COLREG-based taxonomy.

For this challenge we use thermal infrared (IR) images only.

Task

Given a thermal infrared (IR) image, predict axis-aligned bounding boxes and a class label for each detection.

The challenge uses two classes:

- Vessel

- Navigational object

Dataset

The challenge dataset is derived from the Maritime Collision Avoidance Dataset Germany, English Channel, and The Netherlands (Gorczak et al.). We use thermal infrared (IR) images only and keep the original train/validation split.

The new test split is provided as images only (labels withheld) and is used for ranking.

We provide a ready-to-use challenge dataset that already includes all modifications described below. Participants do not need to retrieve the original dataset and apply preprocessing themselves.

Class mapping

The source dataset follows a COLREG-based taxonomy with five classes. For this challenge we simplify the label space to mitigate class imbalance and ambiguity in thermal IR imagery and to enable a robust benchmark:

- Vessel = power-driven vessel + sailboat

- Navigational object = seamark + obstacle

- Dropped: seaplane (extremely rare)
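The merge above can be pictured as a simple lookup table. The following is only an illustrative sketch: the provided challenge dataset already has this mapping applied, and the label strings used here are assumptions that may not match the spelling in the source annotations.

```python
# Illustrative mapping from the five source classes to the two challenge
# classes. The label strings are assumed, not taken from the annotation files.
SOURCE_TO_CHALLENGE = {
    "power-driven vessel": "vessel",
    "sailboat": "vessel",
    "seamark": "navigational object",
    "obstacle": "navigational object",
    "seaplane": None,  # dropped (extremely rare)
}


def remap_labels(labels):
    """Map source labels to challenge labels, skipping dropped classes."""
    remapped = []
    for label in labels:
        target = SOURCE_TO_CHALLENGE[label]
        if target is not None:
            remapped.append(target)
    return remapped
```

For example, `remap_labels(["sailboat", "seaplane", "seamark"])` keeps two of the three labels and discards the seaplane.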

Preprocessing

- Thermal polarity normalization: some IR images in the source dataset use inverted thermal scaling; we convert all images so that hotter regions correspond to higher pixel values.
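As a rough illustration of what polarity normalization means, the sketch below flips the intensity scale of an unsigned-integer image with NumPy. It assumes the image uses the full range of its dtype, and it does not cover how inverted images are identified, which the description above does not specify. The challenge dataset already ships normalized, so participants do not need to run this step.

```python
import numpy as np


def invert_polarity(image):
    """Flip the intensity scale of an unsigned-integer image.

    Assumes the image spans the full range of its dtype, so the inversion
    is simply (max value of dtype) - pixel value.
    """
    if image.dtype.kind != "u":
        raise ValueError("expected an unsigned integer image")
    max_value = np.iinfo(image.dtype).max
    return (max_value - image).astype(image.dtype)


# Tiny 2x2 example: after inversion, formerly dark pixels become bright.
example = np.array([[0, 10], [200, 255]], dtype=np.uint8)
inverted = invert_polarity(example)
```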

Annotation counts (after merging)

- Train: vessel = 914, navigational object = 1,139

- Validation: vessel = 222, navigational object = 253

Submission format

Participants will run inference on the test images (labels are not provided) and submit a single COCO-style JSON containing the predicted bounding boxes, class labels, and confidence scores.

The JSON is uploaded to the evaluation server, which computes metrics automatically using the COCO evaluation protocol.

A sample submission file is available in the GitHub repository.
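For orientation, here is a minimal sketch of building such a file, assuming the standard COCO detection results layout: a list of entries with `image_id`, `category_id`, `bbox` as `[x, y, width, height]`, and `score`. The category ids and the input tuple layout are assumptions made for illustration; the sample submission in the repository is the authoritative reference.

```python
import json

# Assumed category ids; verify against the sample submission file.
CATEGORY_IDS = {"vessel": 1, "navigational object": 2}


def to_coco_results(detections):
    """Convert (image_id, label, (x1, y1, x2, y2), score) tuples
    into COCO-style result dictionaries with [x, y, width, height] boxes."""
    results = []
    for image_id, label, (x1, y1, x2, y2), score in detections:
        results.append({
            "image_id": image_id,
            "category_id": CATEGORY_IDS[label],
            "bbox": [x1, y1, x2 - x1, y2 - y1],
            "score": float(score),
        })
    return results


# Hypothetical detections for two test images.
detections = [
    (1, "vessel", (10.0, 20.0, 110.0, 70.0), 0.92),
    (2, "navigational object", (5.0, 5.0, 25.0, 45.0), 0.40),
]
submission_json = json.dumps(to_coco_results(detections))
```

The resulting string can be written to a file (for example a hypothetical `predictions.json`) and uploaded. Note that many detectors output corner coordinates, so the conversion to width and height is an easy step to miss.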

Evaluation Metrics

We evaluate predictions using the commonly used COCO object detection protocol and report AP, AP50, AP75, AR1, and AR10.

The determining metric for winning is AP. In case of a tie, AP50 is used as the tie-breaker.
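In the COCO protocol, a detection is matched to a ground-truth box based on intersection over union (IoU). AP averages over IoU thresholds from 0.50 to 0.95 in steps of 0.05, while AP50 and AP75 use the single thresholds 0.50 and 0.75. The sketch below only illustrates the IoU computation for `[x, y, width, height]` boxes; it is not the server's evaluation code, and the function name is chosen here for illustration.

```python
def iou_xywh(box_a, box_b):
    """Intersection over union of two [x, y, width, height] boxes."""
    ax, ay, aw, ah = box_a
    bx, by, bw, bh = box_b
    # Width and height of the overlap region (zero if the boxes are disjoint).
    inter_w = max(0.0, min(ax + aw, bx + bw) - max(ax, bx))
    inter_h = max(0.0, min(ay + ah, by + bh) - max(ay, by))
    intersection = inter_w * inter_h
    union = aw * ah + bw * bh - intersection
    return intersection / union if union > 0 else 0.0


# The ten IoU thresholds that COCO AP averages over: 0.50, 0.55, ..., 0.95.
IOU_THRESHOLDS = [round(0.50 + 0.05 * i, 2) for i in range(10)]

# Two 10x10 boxes offset by half a width overlap with IoU = 50 / 150.
half_overlap = iou_xywh([0, 0, 10, 10], [5, 0, 10, 10])
```

Such a pair would not count as a match even at the most lenient threshold of 0.50, which shows why accurate localization matters for the AP ranking.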

Baselines

We provide a baseline model (Faster R-CNN with ResNet-50 backbone pretrained on COCO) and starter code to support participation and reproducibility.

Participate

- Download the challenge dataset.

- Train on the training split and tune hyperparameters on the validation split.

- Generate predictions for the test split and export them as a COCO-style JSON file.

- Upload your JSON to the evaluation server.

- After submission, your results will be evaluated on the server. Please refresh the dashboard page to see results. The dashboard will also display potential errors in case of failed submissions (hover over the error icon).

Terms and Conditions

- Submissions must be made before the challenge deadline listed on the participation page. Submissions made after the deadline will not count towards the final results of the challenge.

- Submissions are limited to three per day. Failed submissions do not count towards this limit.

- The winner is determined by the highest AP score (AP50 in case of a tie).

- Only one model per team per track counts toward the final ranking. If a team submits multiple models, only the best-scoring submission is used.

- You are allowed to use additional publicly available data for training, but must disclose it at the time of upload. This also applies to pre-training.

- Please report the speed of your method (FPS) and indicate the hardware used.

- For your method to be considered for the winning positions and included in the results paper, you will be required to submit a short report describing your method. More information about this will be released towards the end of the challenge.

- The top 3 teams will be featured as co-authors in the challenge summary paper, which will be included in the CVPR 2026 Workshop Proceedings and IEEE Xplore.

- Note that we (as organizers) may upload models for this challenge, but we do not compete for a winning position. Our models merely serve as references on the leaderboard.

If you have questions, please use the MaCVi Support forum.